The year is 2035. You’re standing on a factory floor overseeing a fleet of 300 humanoid robots, each one silently and efficiently going about its business. There’s just one slight snag: you’ve got 300 individual remote controls, and your plan to kitbash them into a giant, functional mech suit has already been vetoed by HR. The sheer logistical faff of managing a massive robotic workforce remains one of the most significant—if decidedly unglamorous—hurdles to our automated future. But what if you could skip the joysticks entirely? What if you could simply… think, and the robots obeyed?

This isn’t the opening gambit of a dystopian thriller; it’s the very real problem a new open-source project called Kinexus is looking to crack. In a world increasingly obsessed with invasive brain-computer interfaces (BCIs) such as Neuralink’s implant, Kinexus takes a more grounded, “plug-and-play” approach. It pairs a non-invasive EEG headset with voice input, translating a user’s thoughts and spoken commands into actionable tasks for a legion of humanoids. It’s less about surgical implants and more about building a practical, scalable bridge between the human mind and the industrial workforce.

The Scaling Headache of Robot Control

As factories and warehouses scramble to deploy humanoid robots, they’re hitting a hard operational wall. The one-to-one model, with one operator for every robot, simply doesn’t scale. Current control methods usually involve labyrinthine software interfaces or cumbersome “teach pendants” that require direct, manual programming for every single unit. The result is a bottleneck that threatens to stifle the very efficiency these robots are supposed to provide. Managing a handful of bots is tricky enough; managing hundreds is a logistical nightmare.

This is where the need for a centralised, intuitive command centre becomes paramount. The industry is crying out for a “control plane” for its physical assets—a way for a single human supervisor to orchestrate an entire fleet without breaking a sweat. Kinexus suggests that the most natural user interface is the one we’ve been using since birth: the human brain.

Kinexus: Your Mind as the Master Dashboard

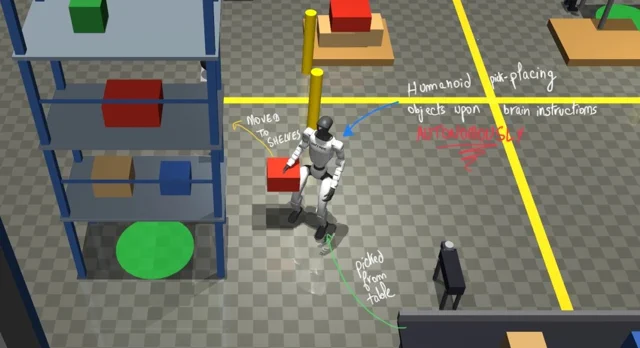

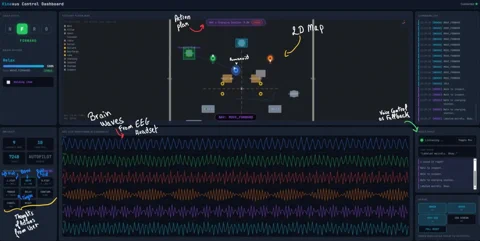

At its heart, Kinexus is a control dashboard that functions as a real-time interpreter between you and your robot army. Developed by AI specialist Mourad Ouazmour and written primarily in Python, the system is designed to act as the central nervous system for factory automation. It visualises incoming brain signals from an off-the-shelf EEG headset, translates them into discrete commands, and maps the factory floor so a supervisor can see where every robot is at a glance.

The control scheme is refreshingly direct. According to the developer, a user might clench their right fist to make a robot pivot right, clench both fists to command a forward march, or even tap their tongue to switch operational modes. The dashboard brings this to life via:

- EEG Live Waveform: A real-time feed of your brain’s electrical chatter, displayed channel by channel.

- Methods Panel: The “translation engine,” where specific mental cues—like imagining a physical movement—are mapped to robot actions such as “MOVE_LEFT” or “MOVE_FORWARD.”

- Factory Floor Map: A 2D schematic showing exactly where every humanoid is, what they’re doing, and what they’re planning to do next.

- Voice Fallback: For more complex, autonomous tasks, the system steps back from direct “telepathy.” A supervisor can simply say, “grab that crate from the conveyor and move it to Pallet 2,” and the designated robot will autonomously navigate and execute the job.
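The Methods Panel’s job can be pictured as a lookup from a classified mental cue to a robot command, with a confidence gate so that noisy, uncertain readings are ignored rather than acted on. The sketch below is a minimal illustration under stated assumptions, not Kinexus’s actual code: the cue labels, the `MOVE_RIGHT` and `SWITCH_MODE` commands, the left-fist mapping, the `Classification` type and the 0.8 threshold are all hypothetical; only `MOVE_LEFT` and `MOVE_FORWARD` come from the project’s description.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical cue labels a motor-imagery classifier might emit.
# MOVE_LEFT and MOVE_FORWARD are named in the article; the rest are illustrative.
CUE_TO_COMMAND: dict[str, str] = {
    "LEFT_FIST": "MOVE_LEFT",       # assumed mirror of the right-fist gesture
    "RIGHT_FIST": "MOVE_RIGHT",     # "clench right fist to pivot right"
    "BOTH_FISTS": "MOVE_FORWARD",   # "clench both to command a forward march"
    "TONGUE_TAP": "SWITCH_MODE",    # "tap tongue to switch operational modes"
}


@dataclass
class Classification:
    """Hypothetical output of an EEG classifier for one window of samples."""
    cue: str           # predicted cue label
    confidence: float  # classifier probability in [0, 1]


def to_command(result: Classification, min_confidence: float = 0.8) -> Optional[str]:
    """Translate a classified cue into a robot command, or None if unsure."""
    if result.confidence < min_confidence:
        return None  # too uncertain: do nothing rather than risk the wrong move
    return CUE_TO_COMMAND.get(result.cue)  # unrecognised cues also yield None


print(to_command(Classification("BOTH_FISTS", 0.93)))  # MOVE_FORWARD
print(to_command(Classification("RIGHT_FIST", 0.55)))  # None
```

Returning `None` for low-confidence or unrecognised cues is a safety-first design choice: on a busy factory floor, a robot that pauses is usually preferable to one that moves the wrong way.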

From Cyberpunk Ambition to GitHub Reality

While the idea of controlling robots via non-invasive EEG isn’t brand new, applying it to fleet management is where Kinexus gets interesting. Historically, EEG research has focused on assistive tech for individuals with disabilities or on controlling a single robot at a time, with accuracy for simple tasks typically hovering between 70% and 90%. Kinexus wants to take that tech out of the sterile lab environment and drop it straight onto the factory floor.
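To put that 70% to 90% range in perspective, here is a back-of-the-envelope calculation comparing it with the 99.9% reliability that heavy industry demands. It assumes, purely for illustration, that every command is decoded independently with the same accuracy; real EEG sessions, where fatigue and electrode drift creep in, will not strictly obey that assumption, and the run of 20 commands is an arbitrary example.

```python
def chance_all_correct(accuracy: float, n_commands: int) -> float:
    """Probability that n independent commands are all decoded correctly."""
    return accuracy ** n_commands


# Compare the research-grade range with an industrial-grade target
# over a hypothetical run of 20 consecutive commands.
for accuracy in (0.70, 0.90, 0.999):
    streak = chance_all_correct(accuracy, 20)
    print(f"{accuracy:.1%} per command -> {streak:.1%} chance of 20 in a row")
```

At 90% per command, the odds of getting 20 commands in a row right fall to roughly 12%; at 99.9% they stay at about 98%. That compounding is why the jump from lab-grade to factory-grade reliability is so steep.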

Perhaps the most significant move is the decision to host Kinexus as an open-source project on GitHub. By doing so, it democratises access to a high-end control paradigm. This isn’t a proprietary, “walled-garden” product from a Silicon Valley titan; it’s a toolkit for anyone to tinker with, improve, or integrate with hardware like the OpenBCI platform. It invites a global community to solve the classic EEG headaches, such as signal noise and the need for bespoke user calibration.

Admittedly, the road from a GitHub repo to a mind-controlled Gigafactory is a long one. Non-invasive EEG lacks the signal resolution of its invasive cousins, and hitting the 99.9% reliability mark required for heavy industry is a monumental task. But Kinexus isn’t a finished product; it’s a bolshy, brilliant idea. It points to a future where human oversight in automated spaces is less about frantic button-mashing and more about focused, strategic intent. For now, it’s a compelling glimpse into a world where managing a hundred robots might be as effortless as a passing thought.