Just when you thought the AI hype cycle couldn’t get any more surreal, a biotech outfit from Melbourne has decided to bin the GPUs and plug an AI directly into a living, biological brain. Well, in a manner of speaking. Cortical Labs, the firm that famously taught a petri dish of 800,000 human neurons to play a decent game of Pong, has moved on to rather more hellish pursuits. After successfully training a fresh batch of 200,000 neurons to navigate the demon-infested corridors of DOOM, they’ve now wired their “DishBrain” into a Large Language Model (LLM).

That’s right. Actual, living human brain cells, firing electrical impulses atop a silicon chip, are now the ones choosing the words an AI speaks. This isn’t just another incremental tweak to machine learning; it’s a bizarre, brilliant, and slightly unsettling leap into the realm of “wetware” and biological computing. Frankly, it makes your average chatbot look about as sophisticated as a pocket calculator.

From Pixelated Paddles to Hellish Landscapes

To grasp how we reached a point where brain cells are effectively co-authoring text, we have to look back at Cortical Labs’ greatest hits. In 2022, the team made global headlines with their “DishBrain” experiment. They grew neurons on a microelectrode array capable of both stimulating the cells and reading their activity. By sending electrical signals to indicate the position of the ball in Pong, the neurons learned to fire in a way that controlled the paddle, demonstrating goal-directed learning in a mere five minutes. It was a staggering proof-of-concept for synthetic biological intelligence.
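For the curious, the closed loop described above can be reduced to two small functions: one that turns the ball's position into a choice of stimulation site, and one that turns recorded firing back into a paddle move. What follows is a toy sketch, not Cortical Labs' actual encoding. The electrode count, the two "motor" regions and every function name are assumptions made purely for illustration.

```python
# Toy sketch of a stimulate-and-read loop. All names and numbers here are
# hypothetical; the real DishBrain encoding is not described in this article.

NUM_SENSORY_ELECTRODES = 8  # assumed number of stimulation sites


def encode_ball_position(ball_y: float, field_height: float) -> int:
    """Map the ball's vertical position to the index of the electrode to stimulate."""
    fraction = min(max(ball_y / field_height, 0.0), 1.0)  # clamp to [0, 1]
    index = int(fraction * NUM_SENSORY_ELECTRODES)
    return min(index, NUM_SENSORY_ELECTRODES - 1)  # keep the top edge in range


def decode_paddle_move(up_spikes: int, down_spikes: int) -> int:
    """Compare spike counts from two assumed motor regions.

    Returns +1 (move up), -1 (move down) or 0 (stay put).
    """
    if up_spikes > down_spikes:
        return 1
    if down_spikes > up_spikes:
        return -1
    return 0


if __name__ == "__main__":
    site = encode_ball_position(ball_y=50.0, field_height=100.0)
    move = decode_paddle_move(up_spikes=12, down_spikes=7)
    print(f"stimulate electrode {site}, paddle move {move}")
```

The point of the sketch is the shape of the loop: game state goes in as stimulation, firing comes out as an action, and the cycle repeats many times a second.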

But Pong is easy mode. In the tech world, there is an ancient, unwritten law for judging new hardware: “Can it run DOOM?” Naturally, Cortical Labs took the bait. The jump from the flat, 2D world of Pong to the 3D environment of DOOM is a massive step up, requiring spatial navigation, threat detection, and split-second decision-making. Yet, the neurons rose to the occasion. The game’s video feed was translated into patterns of electrical stimulation, and the neurons’ responses were decoded into in-game actions like strafing and shooting. While the performance was more “clumsy amateur” than “pro gamer,” it proved the system could handle incredibly complex, dynamic tasks.

Giving an LLM a Biological Ghost in the Machine

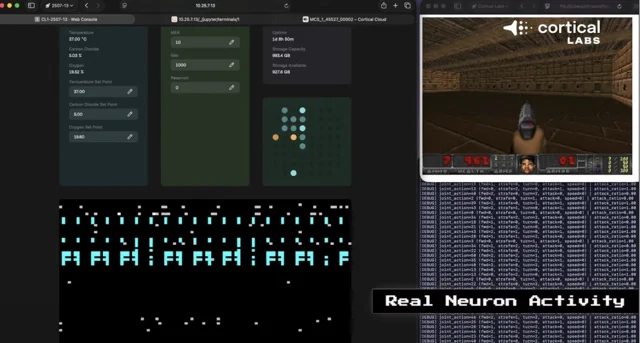

With the shareware classics conquered, the next logical step was apparently to give the neurons a voice. The latest experiment, highlighted by tech luminaries like Robert Scoble, reveals the brain cells interfaced directly with an LLM. Instead of moving a paddle or a Space Marine, the electrical impulses fired by the neurons are now being used to select each token (a word, word fragment, or character) that the AI generates.

A first-look video shows the process in action: a grid displays the channels being stimulated and the corresponding feedback from the neurons as they collectively “decide” on the next piece of text. It is a raw, unfiltered look at biological matter performing a cognitive task that, until now, has been the exclusive domain of power-hungry algorithms running on massive server farms.
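How exactly the neurons' firing is mapped to a token has not been spelled out, so treat the following as one plausible arrangement rather than the real thing. In this sketch, the LLM proposes a handful of candidate tokens, each candidate is assigned to a group of recording channels, and the candidate whose group fires most wins. The function name, the one-group-per-candidate mapping and the example spike counts are all assumptions.

```python
# Hypothetical sketch: pick the next token from LLM candidates using spike
# counts. This is NOT Cortical Labs' published method; the mapping of one
# channel group per candidate token is an assumption for illustration.
from typing import Sequence


def select_token(candidates: Sequence[str], spike_counts: Sequence[int]) -> str:
    """Return the candidate whose assumed channel group recorded the most spikes.

    Ties are resolved in favour of the earliest candidate in the list.
    """
    if not candidates:
        raise ValueError("need at least one candidate token")
    if len(candidates) != len(spike_counts):
        raise ValueError("one spike count is required per candidate")
    best_index = max(range(len(candidates)), key=lambda i: spike_counts[i])
    return candidates[best_index]


if __name__ == "__main__":
    # Made-up candidates and spike counts, purely to show the call.
    print(select_token(["the", "a", "an"], [3, 9, 4]))
```

In a design like this the LLM still does the heavy lifting of proposing sensible options; the dish only casts the deciding vote.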

“We have shown we can interact with living biological neurons in such a way that compels them to modify their activity, leading to something that resembles intelligence,” stated Dr Brett Kagan, Chief Scientific Officer of Cortical Labs, regarding their earlier work.

This new development takes that interaction to a whole new level. It’s one thing to react to a bouncing ball; it’s another entirely to participate in the fundamental construction of language.

Why Bother With Brains?

At this point, you might be wondering: why go through the immense hassle of keeping 200,000 neurons alive in a dish when a high-end NVIDIA chip can run an LLM perfectly well? The answer lies in efficiency and the fundamental limits of silicon. The human brain performs mind-boggling computations on about 20 watts of power, roughly the same as a dim lightbulb. By commonly cited estimates, a supercomputer attempting to simulate that same level of activity would draw on the order of a million times more power.
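To put the 20-watt figure in perspective, here is a back-of-the-envelope comparison. The brain's power draw comes from the paragraph above; the 20-megawatt supercomputer figure is an assumption, chosen as a round number in the range reported for the largest current machines, and is not a measurement of any specific system.

```python
# Back-of-the-envelope power comparison. The supercomputer figure is an
# assumed round number, not a measured value for any particular machine.

BRAIN_POWER_W = 20.0                      # from the article
ASSUMED_SUPERCOMPUTER_POWER_W = 20e6      # assumption: about 20 megawatts


def daily_energy_kwh(power_w: float) -> float:
    """Energy used over 24 hours at a constant power draw, in kilowatt-hours."""
    return power_w * 24 / 1000


power_ratio = ASSUMED_SUPERCOMPUTER_POWER_W / BRAIN_POWER_W

if __name__ == "__main__":
    print(f"power ratio: {power_ratio:,.0f}x")
    print(f"brain per day: {daily_energy_kwh(BRAIN_POWER_W):.2f} kWh")
    print(f"supercomputer per day: {daily_energy_kwh(ASSUMED_SUPERCOMPUTER_POWER_W):,.0f} kWh")
```

Under that assumption the gap is a factor of one million: under half a kilowatt-hour a day for the brain against several hundred thousand for the machine.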

Cortical Labs and others in the field are betting that this incredible energy efficiency can be harnessed. Biological systems excel at parallel processing and adaptive learning in ways that traditional, deterministic computers often struggle to replicate. By merging living neurons with silicon, they are creating a hybrid computing architecture that could one day power systems that learn faster and consume a fraction of the electricity.

This isn’t just about building a more eccentric chatbot. The team at Cortical Labs, led by CEO Dr Hon Weng Chong, envisions a future where this technology revolutionises robotics, personalised medicine, and drug discovery. Imagine a robot that doesn’t just follow pre-programmed scripts but learns and adapts to a new environment with the fluid intelligence of a living organism. Or consider using a patient’s own neurons on a chip to test the efficacy of new treatments for neurological conditions like epilepsy.

The road ahead is long, and biological systems are notoriously temperamental compared to the reliable consistency of silicon. But as Cortical Labs has shown, a cluster of cells in a dish has already graduated from video games to conversation. The prospect of these same neurons one day controlling a physical robot is no longer the stuff of science fiction—it’s the next item on the roadmap. And that is a thought that is simultaneously terrifying and exhilarating.