Let’s be honest: most robot demos are a meticulously choreographed ballet of disappointment. They are usually a slow-motion car crash of clunky movements that make you wonder if the heat death of the universe will arrive before the machine actually finishes the job. But every so often, something cuts through the static. Today, that something is Generalist’s new AI model, GEN-1. The company is making some borderline audacious claims: a general-purpose AI brain for robots that doesn’t just “sort of” work—it absolutely nails it.

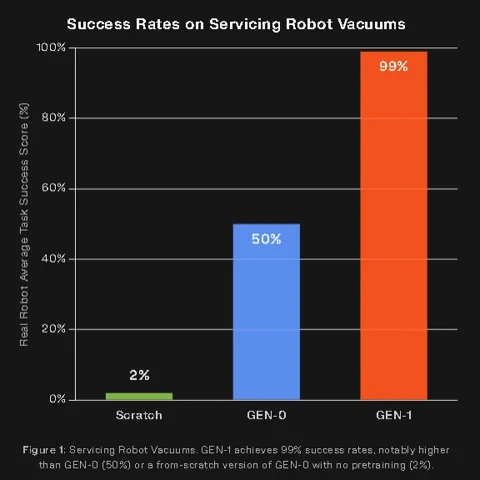

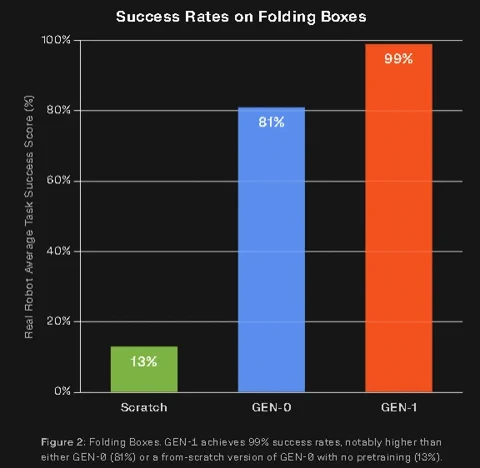

Generalist is pitching GEN-1 as the first model to truly “master” fundamental physical tasks, and they’ve brought the data to back it up. We’re talking average success rates of 99% on tasks where its predecessor, GEN-0, was languishing at a mediocre 64%. It’s also completing tasks up to three times faster than the previous state of the art and, most crucially, it can learn an entirely new trick with only about an hour of robot-specific data. This isn’t just a software patch; it’s a genuine phase shift towards robots that are actually, finally, commercially viable.
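To put that jump in perspective, it helps to flip the success rates into failure rates. The following is a back-of-the-envelope sketch using only the two averages quoted above; the 1,000-attempt batch is a hypothetical number chosen for illustration, not something Generalist reported.

```python
def failure_rate(success_percent: int) -> int:
    """Failure rate in percent, given a success rate in percent."""
    return 100 - success_percent


gen0_fail = failure_rate(64)  # GEN-0 average quoted in the article
gen1_fail = failure_rate(99)  # GEN-1 average quoted in the article

# How many times fewer failures GEN-1 produces per attempt, on average.
reduction = gen0_fail / gen1_fail

# Hypothetical batch size, purely for illustration.
attempts = 1000
gen0_expected_failures = attempts * gen0_fail // 100
gen1_expected_failures = attempts * gen1_fail // 100

print(f"Failure rate: {gen0_fail}% -> {gen1_fail}% ({reduction:.0f}x fewer failures)")
print(f"Per {attempts} attempts: {gen0_expected_failures} vs {gen1_expected_failures} expected failures")
```

Seen this way, the move from 64% to 99% is not a 35-point bump so much as a 36-fold drop in how often someone has to step in and fix things.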

From Scaling Laws to Physical Mastery

Just five months ago, Generalist introduced GEN-0, a model that provided the first real evidence that the “scaling laws”—the same logic that fuelled the meteoric rise of LLMs like GPT—could also be applied to robotics. More data and more compute led to predictably better, more generalised performance. It was a brilliant academic point, but GEN-0 wasn’t quite ready for the big leagues.

GEN-1 is the result of cranking those dials all the way up. It’s been scaled on a massive dataset, now exceeding half a million hours of high-fidelity physical interaction data, and accelerated by new algorithmic breakthroughs. The real kicker, however, is the data source itself. Instead of relying solely on expensive and sluggish teleoperation datasets, Generalist built GEN-1’s foundation on data from low-cost wearable devices worn by humans. This provides a rich library of real-world physics and the micro-corrections that humans make instinctively, but which simulations often miss entirely.

“We believe GEN-1 is the first general physical AI model to cross a key threshold: unlocking commercial viability across a broad range of tasks,” the company stated in its announcement.

The Holy Trinity: Reliability, Speed, and Improvisation

Generalist defines “mastery” as a combination of three key capabilities. Two of these have been the bedrock of industrial automation for sixty years, but it’s the third one that changes the game.

Reliability and Speed: The Industrial Baseline, Supercharged

First, the numbers are simply staggering. In long-duration endurance tests, GEN-1 packed blocks over 1,800 times in a row without a hiccup, folded boxes over 200 times, and even serviced a robot vacuum cleaner over 200 times in a row—a robot maintaining another robot, which is either an engineering dream or the opening scene of a very specific horror film. These tasks ran for hours without human intervention at a 99% success rate.

Then there’s the speed. Robots powered by GEN-1 can assemble a box in 12.1 seconds, a task that took its predecessor around 34 seconds. Packing a phone into a case is done in 15.5 seconds, 2.8 times faster than before. This isn’t just a matter of cranking up motor speeds; the model learns from experience and leverages advanced inference techniques to perform tasks more efficiently than the human demonstrations it originally learned from.
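For anyone who wants to check the maths, here is a quick sketch based on the timings quoted above. The previous phone-packing time is not given in the announcement, so the value below is back-calculated from the "2.8 times faster" figure and should be read as an implied estimate, not a reported number. The 34-second figure is also approximate ("around 34 seconds").

```python
def speedup(old_seconds: float, new_seconds: float) -> float:
    """How many times faster the new time is compared with the old one."""
    return old_seconds / new_seconds


# Box assembly: roughly 34 s for the predecessor versus 12.1 s for GEN-1.
box_speedup = speedup(34.0, 12.1)

# Phone packing: 15.5 s at a quoted 2.8x speedup. The earlier time is
# an implied estimate derived from those two numbers, not a reported one.
phone_old_seconds = 15.5 * 2.8

print(f"Box assembly speedup: {box_speedup:.2f}x")
print(f"Implied earlier phone-packing time: {phone_old_seconds:.1f} s")
```

Both tasks land at roughly the same 2.8x improvement, which fits the headline claim of "up to three times faster".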

Improvisation: The Spark of Intelligence

Reliability and speed are the bread and butter of industrial arms bolted to a factory floor. What they lack is the ability to handle the universe’s persistent refusal to stick to the script. This is where GEN-1’s “improvisational intelligence” comes in.

Generalist describes this as an emergent capability, a form of “freestyle problem-solving.” In one demo, a robot kitting automotive parts accidentally bumps a washer. Instead of freezing or failing, the GEN-1-powered system assesses the situation and adapts. It might set the washer down to regrasp it cleanly, or cleverly use the edge of a slot to reorient the piece, or even bring in its other hand for a bimanual assist. These aren’t pre-programmed recovery routines; they are novel solutions generated on the fly, well outside the training data. This is the difference between simple automation and true autonomy.

More Than a Model, It’s a System

It’s crucial to understand that GEN-1 isn’t merely a set of model weights. It’s a complete system that includes innovations in pre-training, post-training, and inference-time processing. This system-level approach is what makes it so data-efficient: it can adapt to a new robot body and a new task simultaneously with about an hour of fresh data.

Of course, GEN-1 isn’t a magic wand for physical AGI. The company is quick to point out its limitations. Not all tasks achieve that 99%+ success rate, and some industrial applications demand even higher reliability. Furthermore, emergent improvisation raises the critical question of AI alignment. A robot that can creatively solve a problem is fantastic, but you also need to ensure its “creative” solutions don’t involve, say, smashing a hole in a wall for the sake of efficiency.

Still, the launch of GEN-1 feels like a significant milestone. It strengthens the argument that scaling models with vast amounts of real-world physical interaction data is the most promising path toward generalist robots. By focusing on a trifecta of performance—doing the task right, doing it fast, and knowing what to do when things go pear-shaped—Generalist may have just dragged the dream of the useful, general-purpose robot one giant leap closer to reality. For us, that’s more than just a model; it’s a sign that the physical world is finally about to get a whole lot smarter.