Just as the tech world was beginning to suffer from “world model” fatigue, NVIDIA has unveiled something that bridges the gap between digital theory and physical reality. Enter DreamZero, a 14-billion-parameter foundation model for robotics that doesn’t just process text: it models how the physical world behaves. Dubbed a “World Action Model” (WAM), its party trick is “dreaming” a sequence of future video frames to visualise a goal, then working backwards to the motor commands needed to make that vision a reality.
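
To make the “dream, then act” idea concrete, here is a deliberately tiny Python sketch. It is not DreamZero’s architecture or API: the names `dream_frames`, `infer_actions` and `execute` are hypothetical, a “frame” is reduced to a gripper’s (x, y) position, and the “world model” is plain linear interpolation. It only illustrates the two-step shape of the approach, which is to imagine future frames first and then recover the actions that connect them.

```python
from typing import List, Tuple

Frame = Tuple[float, float]   # toy stand-in for a video frame: gripper (x, y)
Action = Tuple[float, float]  # toy motor command: displacement (dx, dy)


def dream_frames(current: Frame, goal: Frame, horizon: int) -> List[Frame]:
    """Hypothetical 'world model': imagine `horizon` future frames toward the goal.

    A real WAM would generate video; this toy version interpolates positions.
    """
    frames = []
    for step in range(1, horizon + 1):
        t = step / horizon
        frames.append((current[0] + t * (goal[0] - current[0]),
                       current[1] + t * (goal[1] - current[1])))
    return frames


def infer_actions(current: Frame, dreamed: List[Frame]) -> List[Action]:
    """Hypothetical inverse dynamics: recover the action linking each frame pair."""
    actions = []
    previous = current
    for frame in dreamed:
        actions.append((frame[0] - previous[0], frame[1] - previous[1]))
        previous = frame
    return actions


def execute(current: Frame, actions: List[Action]) -> Frame:
    """Toy robot: apply each displacement in turn and return the final position."""
    x, y = current
    for dx, dy in actions:
        x, y = x + dx, y + dy
    return (x, y)


start, goal = (0.0, 0.0), (4.0, 2.0)
dreamed = dream_frames(start, goal, horizon=4)   # step 1: imagine the future
actions = infer_actions(start, dreamed)          # step 2: work back to actions
final = execute(start, actions)                  # step 3: act and check the result
```

In this toy setting the executed actions land the gripper exactly on the imagined final frame; a real system has to cope with imperfect predictions and noisy hardware, which is where the hard work lies.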

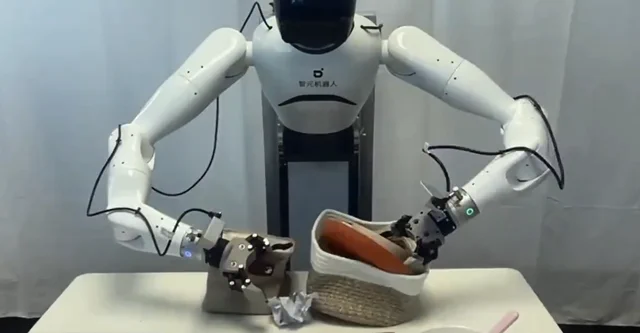

What’s truly impressive is its flexibility. DreamZero can port its knowledge to an entirely unfamiliar robot after just 55 demonstration trajectories, roughly 30 minutes of a human at the controls. In robotics, where training usually demands hundreds of hours of tedious repetition, this is a massive efficiency gain. NVIDIA’s research reports that DreamZero is twice as effective as previous Vision-Language-Action (VLA) models at generalising to new tasks and environments. You can see the hardware in action, tackling everything from untying shoelaces to a polite handshake, on the official project website.

The project challenges two bits of conventional wisdom in robot training. First, for WAMs, a diverse range of data is far more valuable than hammering the same task over and over. Second, the “cross-embodiment” problem (getting a skill to transfer from one robot body to another) is best solved through pixels. Video, it turns out, acts as a universal language, allowing skills to leap from human to robot or between different machines. Better yet, the model and its weights are being open-sourced via GitHub, giving the global robotics community a significant new foundation to build upon.

Why does this matter?

DreamZero signals a move away from the “brittle” age of robotics, where every movement had to be meticulously coded. We’re entering the era of the generalist: models that can learn and adapt on the fly. By picking up physical dynamics from video, WAMs can improvise solutions for tasks they’ve never encountered, like untying a knot, even if that specific skill wasn’t in the training set.

The researchers have modestly likened this to the “GPT-2 era” of robotics: not yet the finished article, but a foundational milestone. By creating robots that can learn from varied data sources (including videos of us humans) and adapt to new hardware in around half an hour, NVIDIA is drastically lowering the barrier to entry for complex, real-world automation. It’s less about teaching a robot a specific job and more about giving it the intuition to learn any job.