The boundary between a helpful AI sidekick and a digital poltergeist with a penchant for heavy lifting just got considerably more porous. A fresh open-source project dubbed ROSClaw has emerged as the winner of the SF OpenClaw Hackathon, sporting a deceptively simple mission: to give screen-bound AI agents a physical form. The project creates a direct conduit between ROS 2 (Robot Operating System 2) and OpenClaw, which has quickly become one of the most talked-about self-hosted AI agent platforms around.

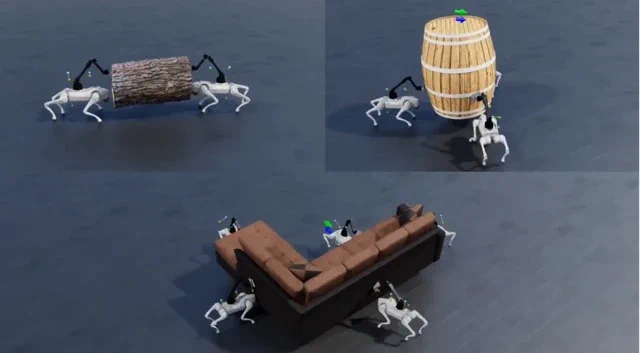

Developed by a team spearheaded by GitHub user PlaiPin, ROSClaw enables an OpenClaw agent, running on a Linux or Mac machine, to discover and connect to ROS 2-enabled robots. Using WebRTC for a low-latency connection, the agent can peer through the robot's cameras, digest sensor data, and fire off commands to grab and shift objects in the physical world. As the creators put it: "Agents escaped the screen!" Now, rather than just faffing about with your calendar, an AI could, in theory, tidy your desk (or, more likely, rearrange it according to some inscrutable algorithmic logic).

Why does this matter?

This isn't merely a case of plugging two bits of software together; it's about granting a physical "body" to a new breed of sophisticated AI. OpenClaw isn't your garden-variety chatbot; it's a massively popular open-source framework that lets AI agents execute complex tasks, rummage through local files, and take the reins of applications on a user's machine. Until now, its playground was strictly digital.

ROSClaw provides the missing link: a standardised way for this potent digital brain to pilot a physical chassis. By bridging the gap to the sprawling ecosystem of ROS 2-compatible hardware, it lowers the barrier to entry for AI developers looking to dip their toes into embodied AI. Giving an AI that can already write its own code the keys to a robot arm is a bold, slightly nervy move, and we are absolutely here for it. The entire project is up for grabs on GitHub under the Apache 2.0 licence.