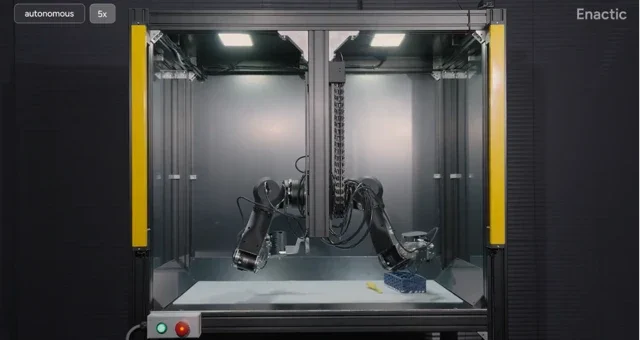

We’ve all been there: watching a jaw-dropping robot demo that looks more like a Hollywood special effect than a rigorous scientific breakthrough, only to find it’s impossible to replicate. If that has left you a bit cynical, you aren’t alone. Robotics has a nagging problem: what works in one lab is often a total non-starter in another, usually because of bespoke hardware and highly specific testing environments. Enactic AI aims to fix this with the OpenArm 02, a fully open-source dual-arm platform built specifically to make evaluation reproducible.

The concept is refreshingly straightforward: standardise the physical hardware so that research results can actually be compared across different institutions without the usual “it works on my machine” caveats. The OpenArm 02 is a modular, 7-DOF (degrees of freedom) humanoid arm system designed to give researchers a common baseline. It’s paired with two rather clever bits of kit: the OpenArm KER, a lightweight wearable for low-latency data acquisition, and AutoEval, a framework that allows for a 24/7 real-world evaluation loop with minimal human oversight. This means no more poor PhD students spending their weekends manually resetting tasks; policies can now be tested around the clock under identical conditions.
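To make the idea of an unattended evaluation loop concrete, here is a minimal Python sketch. It is not AutoEval's actual API: every name in it (`SimulatedTask`, `run_episode`, `evaluate`, and the two toy policies) is hypothetical, and the "robot" is a one-dimensional simulation standing in for real hardware. It only illustrates the general pattern of reset, run, score, repeat, with a fixed seed so that every policy faces the same starting conditions.

```python
import random
from dataclasses import dataclass
from typing import Callable

# Hypothetical stand-ins for illustration only; nothing here is taken from
# AutoEval or the OpenArm software stack.


@dataclass
class EpisodeResult:
    success: bool
    steps: int


class SimulatedTask:
    """Toy task: move a 1-D position to a target within a step budget."""

    def __init__(self, target: float = 1.0, tolerance: float = 0.05,
                 max_steps: int = 50) -> None:
        self.target = target
        self.tolerance = tolerance
        self.max_steps = max_steps
        self.position = 0.0

    def reset(self, rng: random.Random) -> float:
        # Automated reset: no human puts the scene back together.
        self.position = rng.uniform(-0.1, 0.1)
        return self.position

    def step(self, action: float) -> float:
        # Clip the action to mimic a bounded actuator command.
        self.position += max(-0.1, min(0.1, action))
        return self.position

    def succeeded(self) -> bool:
        return abs(self.position - self.target) <= self.tolerance


def run_episode(task: SimulatedTask, policy: Callable[[float], float],
                rng: random.Random) -> EpisodeResult:
    obs = task.reset(rng)
    for step in range(1, task.max_steps + 1):
        obs = task.step(policy(obs))
        if task.succeeded():
            return EpisodeResult(True, step)
    return EpisodeResult(False, task.max_steps)


def evaluate(policy: Callable[[float], float], episodes: int,
             seed: int = 0) -> float:
    """Run many episodes back to back and return the success rate."""
    rng = random.Random(seed)  # same seed -> same start states for every policy
    task = SimulatedTask()
    results = [run_episode(task, policy, rng) for _ in range(episodes)]
    return sum(r.success for r in results) / episodes


def proportional_policy(obs: float) -> float:
    # Move towards the target at a rate proportional to the remaining error.
    return 0.5 * (1.0 - obs)


def idle_policy(obs: float) -> float:
    # Baseline that never moves.
    return 0.0


if __name__ == "__main__":
    print("proportional:", evaluate(proportional_policy, episodes=20))
    print("idle:", evaluate(idle_policy, episodes=20))
```

Because both policies are scored with the same seed, they are compared on identical initial conditions, which is the property a standardised platform is meant to provide across labs.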

This isn’t just a fancy CAD drawing; it’s a full-blown ecosystem. Everything from the electronics to the firmware is open-source, with native ROS 2 support baked in. With a payload capacity of 4.1 kg and back-drivable actuators ensuring safe human interaction, it’s a serious piece of kit ready for the lab right out of the box.

Why does this matter?

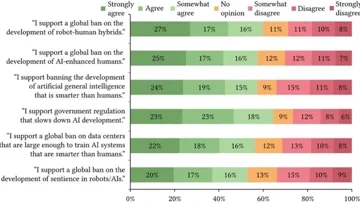

The “reproducibility crisis” is a well-known thorn in the side of modern science, and robotics is particularly susceptible. When over 70% of researchers reportedly struggle to replicate their peers’ experiments, progress stalls. By providing a capable, standardised hardware platform, Enactic AI is offering the community a common language. It shifts the focus from flashy, one-off demos towards genuine, comparable benchmarks, laying the foundations for a faster, more collaborative future where new algorithms can be tested on a truly level playing field.