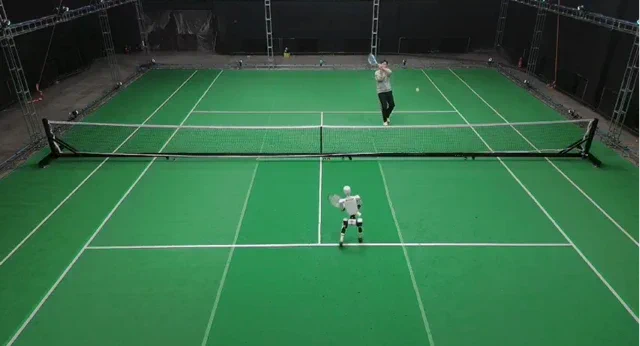

In a turn of events that feels like the opening scene of a rather sophisticated sci-fi flick, a team of researchers has built a robot that learnt a new skill so effectively it ended up beating its human mentor. The sport in question? Tennis. The project, dubbed LATENT, didn’t teach a humanoid to play using pristine, Wimbledon-standard data. Instead, it relied on “imperfect” human motion clips, essentially learning from our own clumsy mistakes. The result is a machine that can now hold its own in multi-shot rallies with genuine finesse.

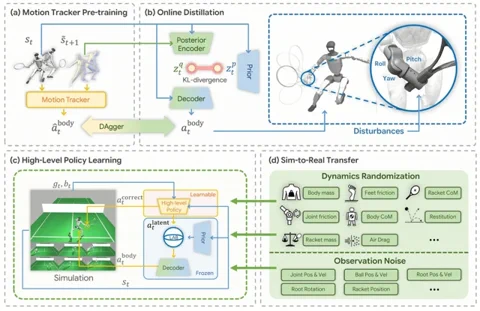

The project, spearheaded by researchers from Tsinghua University and Galbot Inc., tackles one of the biggest hurdles in robotics: teaching complex, agile movements without a perfect instruction manual. Their system develops a “latent action space” from fragmented, less-than-ideal human tennis motions. The secret sauce is a high-level AI policy that functions like a digital coach, correcting and stitching together these flawed primitive skills to successfully wallop a ball back over the net. The entire process is polished in simulation before being unleashed on a real Unitree G1 humanoid via sim-to-real transfer.
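For the curious, the two-level idea described above can be pictured with a toy sketch. This is emphatically not the team's code: every name here (`make_decoder`, `high_level_policy`, `act`), the dimensions, and the random linear "decoder" are hypothetical stand-ins chosen for illustration. It only shows the shape of the idea, a high-level policy that picks a point in a latent action space and adds a small correction to the primitive motion it decodes to.

```python
import random
from typing import Callable, List, Tuple

LATENT_DIM = 4  # size of the toy latent action space (assumed)
ACTION_DIM = 3  # number of toy joint targets (assumed)


def make_decoder(seed: int = 0) -> Callable[[List[float]], List[float]]:
    """Build a fixed linear map from latent codes to joint targets.

    Hypothetical stand-in for a decoder that a real system would learn
    from human motion clips; here the weights are just seeded random numbers.
    """
    rng = random.Random(seed)
    weights = [[rng.uniform(-1.0, 1.0) for _ in range(LATENT_DIM)]
               for _ in range(ACTION_DIM)]

    def decode(z: List[float]) -> List[float]:
        return [sum(w * zi for w, zi in zip(row, z)) for row in weights]

    return decode


def high_level_policy(ball_offset: float) -> Tuple[List[float], List[float]]:
    """Toy 'coach': choose a primitive and a small correction.

    ball_offset is a made-up scalar: positive means the ball is on one side
    of the robot, negative means the other.
    """
    # Select one of two latent codes depending on which side the ball is on.
    z = [1.0, 0.0, 0.0, 0.0] if ball_offset >= 0 else [0.0, 1.0, 0.0, 0.0]
    # Nudge the first joint target in proportion to the offset.
    correction = [0.1 * ball_offset] + [0.0] * (ACTION_DIM - 1)
    return z, correction


def act(decode: Callable[[List[float]], List[float]],
        ball_offset: float) -> List[float]:
    """Decode the chosen primitive, then apply the high-level correction."""
    z, correction = high_level_policy(ball_offset)
    primitive = decode(z)
    return [p + c for p, c in zip(primitive, correction)]


if __name__ == "__main__":
    decode = make_decoder()
    print(act(decode, 0.5))
```

In a real system both levels would be learned rather than hand-written, and the policy would be trained in simulation before touching hardware; the sketch just makes the division of labour concrete.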

As they say, the proof of the pudding is in the eating, or in this case, the scoreboard. According to lead author Zhikai Zhang, the learning curve was remarkably steep. “On the first day of real-world deployment, the robot couldn’t return a single ball I served,” Zhang noted. “By the final day of the project, I could no longer beat it.” For those keen to pore over the technical blueprints or perhaps train their own robotic doubles partner, the team has made the project details and code public on its project page and GitHub repository.

Why does this matter?

This isn’t merely about creating a robotic hitting partner for a lonely Sunday morning at the club. The true breakthrough of the LATENT system is its capacity to learn from messy, “real-world” data. Typically, robotic training requires meticulously curated datasets, which are both eye-wateringly expensive and time-consuming to produce. By learning to self-correct and combine flawed examples, this approach could radically accelerate how we teach robots to navigate complex, unpredictable tasks. It’s a massive leap towards robots that can learn on the job in environments that aren’t perfectly sterile—from bustling warehouses to disaster zones—without needing a flawless demonstration every single time.